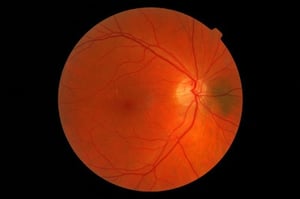

The vast majority of clinical trials in diabetes exclude patients with active retinal disease, because interventions that lower glucose rapidly can temporarily worsen retinopathy. This was originally shown in type 1 diabetes [1] and more recently also in type 2 diabetes [2, 3]. Screening for diabetic retinopathy before inclusion in a clinical trial is relatively cumbersome, partly because it often involves a separate visit to an ophthalmologist. Fundus photography with offline interpretation by an ophthalmologist is widely used in clinical practice, but much less so in clinical trials.

In this blog post I briefly describe four recent studies that used artificial intelligence to automate the interpretation of retinal images, and conclude with an outlook on how this may facilitate screening potential trial participants for diabetic retinopathy. But first, a brief introduction to deep learning, the methodology applied in all of these papers.

Figure 1: Artificial Intelligence [Designed by starline / Freepik]

According to Wikipedia, deep learning is a form of machine learning in which computers use statistical techniques to progressively improve their performance on a specific task. An artificial neural network is a learning algorithm that models complex relationships between inputs and outputs; deep learning refers to networks with multiple hidden layers between the input and the output. Successful applications include speech recognition. Deep learning can also be unsupervised, meaning that it can learn from unlabeled data without manual annotation. A type of neural network optimized for image classification is the deep convolutional neural network. Each of the papers described below developed a deep learning algorithm using one or more training datasets of retinal images and quantified its performance in one or more validation datasets, often in comparison with a panel of experienced ophthalmologists.
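
To make the idea of a convolutional layer more concrete, here is a minimal sketch in plain Python (not code from any of the papers) of the basic operation such a layer performs: a small grid of weights, the kernel, slides across the image and produces a weighted sum at each position, after which a non-linearity (ReLU) sets negative responses to zero. The 5x5 toy image and the hand-chosen kernel are illustrative assumptions; in a real network the kernel weights are learned from the training images, and many such layers are stacked.

```python
def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the feature map.

    Like most deep learning libraries, this computes cross-correlation
    (the kernel is not flipped), which is what CNN layers call "convolution".
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    feature_map = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            # Weighted sum of the kh x kw patch currently under the kernel
            s = sum(
                image[i + di][j + dj] * kernel[di][dj]
                for di in range(kh)
                for dj in range(kw)
            )
            row.append(s)
        feature_map.append(row)
    return feature_map


def relu(feature_map):
    """Non-linearity applied after each convolution: negative responses become 0."""
    return [[v if v > 0 else 0 for v in row] for row in feature_map]


# Hypothetical 5x5 grayscale "image": a bright vertical stripe in column 2
image = [[0, 0, 1, 0, 0] for _ in range(5)]

# Hand-chosen vertical-line detector; a trained CNN would learn these weights
kernel = [
    [-1, 2, -1],
    [-1, 2, -1],
    [-1, 2, -1],
]

activations = relu(conv2d_valid(image, kernel))
for row in activations:
    print(row)
```

Each output row is `[0, 6, 0]`: the response is strongest where the kernel sits directly over the stripe and is suppressed elsewhere. Stacking many learned kernels like this lets later layers respond to progressively more complex patterns.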

Gulshan et al. [4] reported an area under the receiver operating characteristic curve (AUC) of 0.991 for referable diabetic retinopathy, that is, retinopathy severe enough to warrant referral to an ophthalmologist. At an operating point selected for high sensitivity, the sensitivity was around 97% and the specificity almost 94%.
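
The metrics used throughout these papers can be illustrated with a small sketch in plain Python. Sensitivity is the fraction of truly referable cases that the algorithm flags, specificity is the fraction of non-referable cases it correctly clears, and the AUC summarizes performance across all possible thresholds; choosing an operating point means picking one threshold on the algorithm's continuous output. The scores and labels below are hypothetical toy data, not data from Gulshan et al., and the AUC is computed through its equivalent rank formulation: the probability that a randomly chosen referable case scores higher than a randomly chosen non-referable one.

```python
def sensitivity_specificity(scores, labels, threshold):
    """Sensitivity and specificity when scores >= threshold are called 'referable'.

    labels: 1 = referable retinopathy according to the reference graders, 0 = not.
    """
    tp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 1)
    fn = sum(1 for s, y in zip(scores, labels) if s < threshold and y == 1)
    tn = sum(1 for s, y in zip(scores, labels) if s < threshold and y == 0)
    fp = sum(1 for s, y in zip(scores, labels) if s >= threshold and y == 0)
    return tp / (tp + fn), tn / (tn + fp)


def auc(scores, labels):
    """Area under the ROC curve as the probability that a random positive case
    scores higher than a random negative case (ties count as one half)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical algorithm outputs (probability of referable DR) and reference labels
scores = [0.95, 0.80, 0.65, 0.40, 0.30, 0.20, 0.10, 0.55]
labels = [1, 1, 1, 1, 0, 0, 0, 0]

print(auc(scores, labels))
# A low threshold favours sensitivity, a high threshold favours specificity
print(sensitivity_specificity(scores, labels, threshold=0.35))
print(sensitivity_specificity(scores, labels, threshold=0.60))
```

On this toy data the AUC is 0.9375. Lowering the threshold from 0.60 to 0.35 raises sensitivity from 0.75 to 1.0 while specificity drops from 1.0 to 0.75, which is the trade-off behind selecting an operating point "for high sensitivity".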

Ting et al. [5] reported slightly less convincing results in a multiethnic population from Singapore. Again using referable diabetic retinopathy as the main outcome, they found an AUC of 0.936, a sensitivity of 90.5% and a specificity of 91.6%.

Rather than focusing on retinopathy, Poplin et al. [6] used deep learning on retinal photographs to predict cardiovascular risk factors. Age could be predicted with a mean absolute error within 3.26 years, systolic blood pressure with a mean absolute error within 11.23 mmHg, gender with an AUC of 0.97 and previous major adverse cardiovascular events (MACE) with an AUC of 0.70. Of course, most of these parameters can be measured far more easily than by taking a fundus photograph, but this surprising approach shows the power of deep learning algorithms.
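
A mean absolute error (MAE) is simply the average size of the prediction errors, expressed in the units of the predicted quantity: an MAE of 3.26 years means that predicted ages were off by 3.26 years on average. A minimal sketch with hypothetical numbers (not data from Poplin et al.):

```python
def mean_absolute_error(predicted, actual):
    """Average of |prediction - true value|, in the units of the target (e.g. years)."""
    if len(predicted) != len(actual) or not predicted:
        raise ValueError("need two non-empty sequences of equal length")
    return sum(abs(p - a) for p, a in zip(predicted, actual)) / len(actual)


# Hypothetical ages predicted from fundus photographs vs. recorded ages
predicted_age = [52.0, 61.5, 47.0, 70.0]
actual_age = [55.0, 60.0, 49.0, 66.0]
print(mean_absolute_error(predicted_age, actual_age))
```

Here the individual errors are 3, 1.5, 2 and 4 years, giving an MAE of 2.625 years. Because errors are not squared, a single large miss weighs no more than several small ones adding up to the same total.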

In the last paper [7], optical coherence tomography (OCT) scans rather than retinal photographs were analyzed. The algorithm described in this paper performed as well as four retina specialists who had access to patient notes and fundus images in addition to the OCT scans when making referral recommendations.

How might all this change the way we screen potential trial participants? On April 11, 2018, the FDA approved IDx-DR, a commercial software package for use with a Topcon NW400 retinal camera [8, 9]. Retinal images are uploaded to the cloud, and the software returns one of two results: either “more than mild diabetic retinopathy detected: refer to an eye care professional” or “negative for more than mild diabetic retinopathy; rescreen in 12 months.” For this outcome, a sensitivity of 87.2% and a specificity of 90.7% were reported [10]. With slight modifications, such a system could be used to assess a retina-related exclusion criterion in clinical trials. This would reduce the burden on trial participants, clinical centers and ophthalmologists, and would also improve source data quality, allowing further analysis where indicated.
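
To get a feel for what these figures would mean when screening trial candidates, sensitivity and specificity can be converted into predictive values with Bayes' theorem. The short Python sketch below does this; the 20% prevalence of more-than-mild retinopathy among screened candidates is purely an assumption for illustration, and predictive values shift considerably with prevalence.

```python
def predictive_values(sensitivity, specificity, prevalence):
    """Positive and negative predictive value of a binary screening test (Bayes' theorem)."""
    tp = sensitivity * prevalence              # retinopathy present and flagged
    fn = (1 - sensitivity) * prevalence        # retinopathy present but missed
    fp = (1 - specificity) * (1 - prevalence)  # no retinopathy but flagged
    tn = specificity * (1 - prevalence)        # no retinopathy and cleared
    return tp / (tp + fp), tn / (tn + fn)


# Sensitivity and specificity as reported for IDx-DR [10]; the 20% prevalence
# among screened trial candidates is an assumption, not a reported figure.
ppv, npv = predictive_values(sensitivity=0.872, specificity=0.907, prevalence=0.20)
print(f"PPV {ppv:.3f}, NPV {npv:.3f}")
```

Under that assumption, about 70% of "refer" results would be true positives and about 97% of negative results would be correct; among 1,000 screened candidates, roughly 26 with more-than-mild retinopathy would be missed.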